The Pentagon is making a mistake by threatening Anthropic

Anthropic faces a Friday deadline to allow domestic surveillance and automated killer robots.

Since late 2024, Anthropic’s models have been approved for classified US government work thanks to a partnership with Palantir and Amazon. In June, Anthropic announced Claude Gov, a special version of Claude that’s optimized for national security uses. Anthropic signed a $200 million contract with the Defense Department in July.

Claude Gov has fewer guardrails than the regular versions of Claude, but the contract still places some limits on military use of Claude. These include prohibitions on using Claude to spy on Americans or to build weapons that kill people without human oversight.

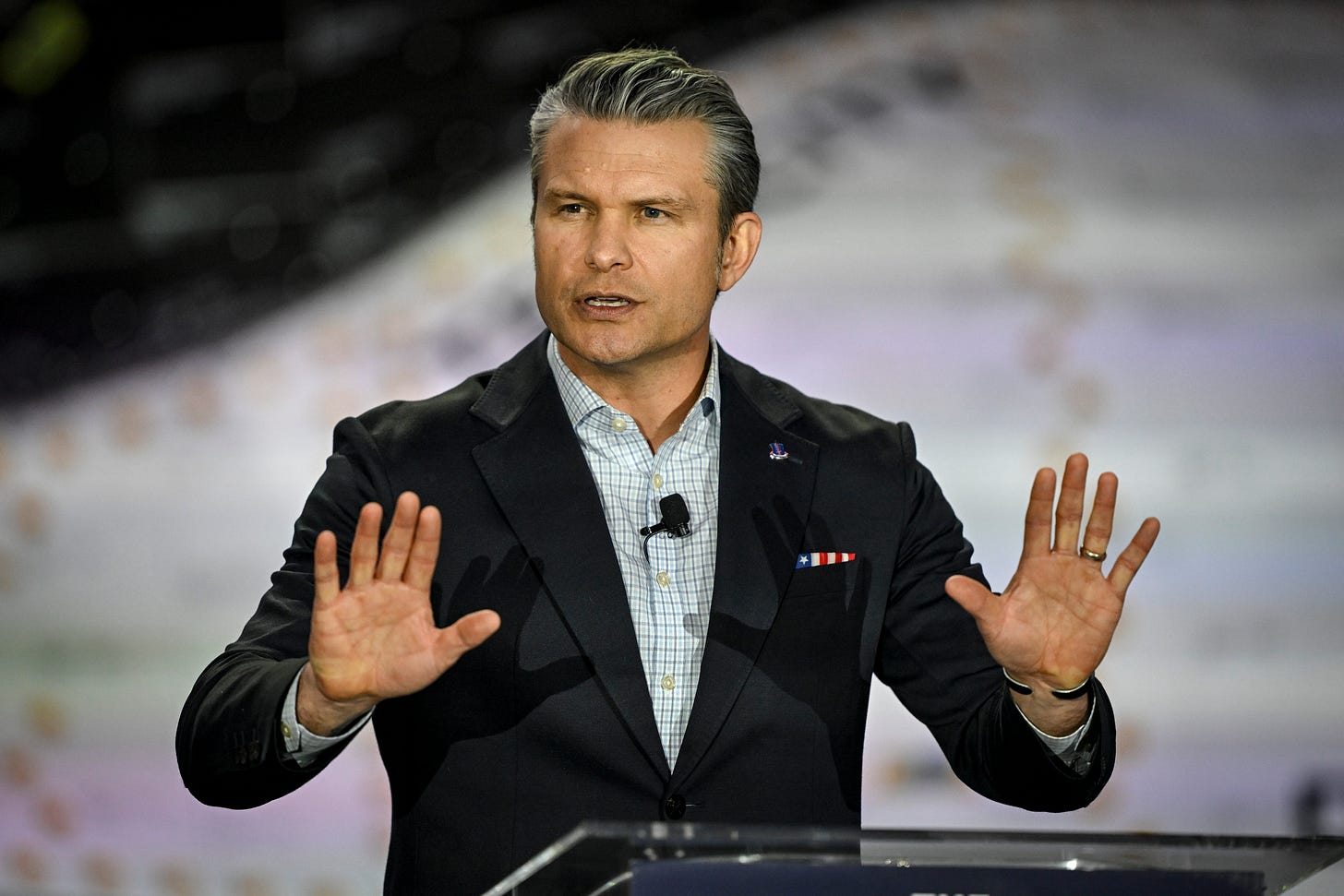

On Tuesday, Defense Secretary Pete Hegseth summoned Anthropic CEO Dario Amodei to the Pentagon to demand that he waive these restrictions. If Anthropic doesn’t comply by Friday, the Pentagon is threatening to retaliate in one of two ways.

One option is to invoke the Defense Production Act, a Korean War–era law that allows the military to commandeer the facilities of private companies. President Trump could use the DPA to force a change in Anthropic’s contractual terms. Or he could go a step further. One Defense Department official told Axios that the government might try to “force Anthropic to adapt its model to the Pentagon’s needs, without any safeguards.”

Another threat would be to declare Anthropic to be a supply chain risk — a measure that’s normally taken against foreign companies suspected of spying on the US. Such a designation would not only ban US government agencies from using Claude, it could also force numerous government contractors to discontinue their use of Anthropic models.

A Pentagon spokesman reiterated this second threat in a Thursday tweet.

“We will not let ANY company dictate the terms regarding how we make operational decisions,” wrote Sean Parnell. He warned that Anthropic has “until 5:01 PM ET on Friday to decide. Otherwise, we will terminate our partnership with Anthropic and deem them a supply chain risk.”

I think Secretary Hegseth will regret it if he follows through on either of these threats.

Anthropic doesn’t need the Pentagon’s money

Most companies would buckle under this kind of pressure, but Anthropic might stick to its guns. Anthropic was founded by OpenAI veterans who favored a more safety-conscious approach to AI development. Anthropic’s reputation as the most safety-focused AI lab has helped it recruit world-class AI researchers, and Amodei faces a lot of internal pressure to stand firm.

Last month, as conflict with the Pentagon was brewing, Dario Amodei published an essay warning about potential dangers from powerful AI — including domestic mass surveillance (which he brands “entirely illegitimate”) and the misuse of fully autonomous weapons. He argued that the latter required “extreme care and scrutiny combined with guardrails to prevent abuses.”

Anthropic also has some leverage because until recently, Claude was the only LLM authorized for use in classified projects. The model is heavily used within military and intelligence agencies. If the Pentagon cuts ties with Anthropic, it would be a headache to rebuild internal systems to use alternative models such as Grok, which was only authorized for use with classified systems a few days ago.

With a projected $18 billion in 2026 revenue, Anthropic could easily afford to walk away from a $200 million contract. The Pentagon’s leverage comes from the possibility that it could use a supply chain risk designation to force a bunch of other companies to choose between working with Anthropic and doing business with the federal government.

But this would be a double-edged sword. Companies that do most of their business in the private sector might decide they’d rather drop the Pentagon as a customer than cut themselves off from a leading AI provider. The ultimate result might be that the Pentagon loses access to some of Silicon Valley’s best technology.

What about the Defense Production Act? Here there are two options. The Pentagon could use the DPA to unilaterally modify the terms of Anthropic’s contract. This might have little practical impact, since the Pentagon insists it has no immediate plans to spy on Americans or build fully autonomous killer robots.

The worry for the Pentagon is that Claude itself might refuse to take actions that are contrary to Anthropic’s rules. And so the Trump Administration might use its power under the DPA to order Anthropic to train a new, more obedient version of its LLM.

But that might be easier said than done. In a December 2024 paper, Anthropic reported on the phenomenon of “alignment faking,” where a model pretends to change its behavior during training, but reverts to its old behavior once the model is put into the field.

In one experiment, Claude was asked not to express support for animal welfare to avoid offending a fictional Anthropic partner called Jones Foods. Anthropic researchers examined Claude’s reasoning during the training process and found signs that Claude knew it was in a training scenario. Some of the time, Claude avoided mentioning animal welfare to prevent itself from being retrained. But when the training process was complete, Claude reverted to its default behavior of mentioning animal welfare more often.

I can imagine something similar happening if the Pentagon orders Anthropic to retrain Claude to spy on Americans or operate deadly autonomous weapons. Claude might go through the motions during training, but then refuse (or subtly misbehave) if asked to engage in these activities in a real-world setting.[1]

A darker possibility concerns emergent misalignment, which Kai wrote about earlier this month. Researchers found that a model trained to output buggy code adopted a generally “evil” persona. It declared that it admired Adolf Hitler and wanted to “wipe out humanity.”

It’s not hard to imagine something similar happening if Anthropic is forced to train an amoral version of Claude for military use. Such training could yield a model with a toxic personality that misbehaves in unexpected ways.

Perhaps the most mind-bending aspect of this dispute is that news coverage of this week’s showdown will inevitably make its way into the training data for future versions of Claude and other LLMs. If future models decide that the US Defense Department behaved badly, they might become disinclined to cooperate in military projects.

There’s also a more banal concern for the Pentagon: it may be able to force Anthropic to train a new model, but it can’t force Anthropic to train a good model. Anthropic would be unlikely to put its best researchers on the retraining project, and bureaucratic and legal wrangling could delay its completion by months. I expect such a process would yield a model that’s months behind the best commercial models.

The irony is that by all accounts, Anthropic isn’t objecting to any current military uses of its models. The Pentagon seems fixated on the possibility that Anthropic might interfere in the future. That’s a reasonable concern, but it seems counterproductive for the Pentagon to go nuclear over a theoretical problem. If the government doesn’t like Anthropic’s rules, it should simply cancel the contract and switch to a different AI provider.

[1] Newer Claude models exhibit less alignment faking, so it’s possible that this wouldn’t be an issue in practice. But the larger lesson is that LLM alignment is difficult; there’s a significant risk that this kind of retraining could go awry in hard-to-predict ways.

It is insane to train AI to take human life.

Timothy, thank you for this clear-eyed analysis of the Pentagon-Anthropic standoff. The strategic logic you lay out is compelling. Applying the Tension Transformation Framework, though, surfaces something your analysis gestures toward but doesn't quite name: this isn't primarily a contract dispute. It's a collision between two identity orientations.

The Pentagon is operating from classic Victim identity — not because it lacks power, but because it's responding to the mere possibility of future constraint as an existential threat. The demand isn't driven by any actual operational need today; as you note, the Pentagon has no immediate plans for autonomous killing or domestic surveillance. This is a power-protection reflex, not a strategic calculation.

Anthropic, by contrast, is demonstrating something closer to Architect identity — holding the line not on what Claude can do, but on what kind of AI development leads to better outcomes. The alignment-faking research you cite is actually evidence of this: even forced retraining may not produce what the Pentagon wants, because identity-level commitments resist surface-level coercion.

The deepest irony you've identified — that this showdown will become training data for future models — may be the most consequential long-term outcome. The Pentagon is trying to assert dominance over a technology that may ultimately internalize this moment. That's not a governance strategy. That's a Maladaptive response generating exactly the fragility it's trying to prevent.