I don’t think we are close to “AI scientists”

Today's AI agents are not designed to extract deep insights from new observations.

In February, my colleague Kai Williams pointed out that LLMs have an uncanny ability to recognize authors based on their unpublished prose. In recent weeks, journalists like Megan McArdle and Kelsey Piper have confirmed this.

I decided to try it out for myself. Back in 2012, a friend paid me $500 to write an essay about the Great Canadian Maple Syrup Heist. It never got published. So on Friday, I opened ChatGPT in incognito mode and pasted in five paragraphs from the essay.

ChatGPT said it wasn’t sure who the author was, guessing that it might be Nate Silver or my former Vox.com colleague Matthew Yglesias. When I added four more paragraphs, the chatbot responded: “This one I can identify pretty confidently—it’s by Timothy B. Lee.”

But when I asked ChatGPT why it thought the essay was written by me, it couldn’t give me a specific reason. “Even though Timothy B. Lee often writes clear, explanatory pieces, there’s nothing here that acts like a fingerprint—no recurring phrases, specific policy framing, or known article structure that ties it definitively to him.”

I think there’s a lesson here that goes well beyond identifying authors.

People have a lot of implicit knowledge: things we know but struggle to fully explain. We often describe this phenomenon with body-oriented metaphors, saying that an insight is “on the tip of our tongue,” that we “can’t put our finger on” an idea, or that we know something “in our gut.”

Something similar is true of LLMs: their ability to perform cognitive tasks greatly exceeds their ability to explicitly explain how and why they’re able to perform them.

But there’s an important difference between people and LLMs. The human brain learns continuously: as we go through our day, our brains make new connections, recognize new patterns, and form new hunches. Our stock of implicit knowledge keeps expanding.

In contrast, LLMs only do this during training. LLMs have an uncanny ability to recognize authors — but only authors whose work was well represented in their training data. Once a model is trained, its weights are frozen and its capacity to learn new patterns (for example, the writing styles of new authors) is greatly reduced.

Recently, there has been a lot of excitement about AI agents like Claude Code and OpenClaw. Much of the hype is justified. Claude Code really is revolutionizing computer programming, and agents like OpenClaw very well might transform other parts of the economy and our daily lives.

Industry leaders expect even bigger changes in the near future. In an interview last month, Sam Altman said that OpenAI is aiming to build an “automated AI researcher” by March 2028. Some people expect this (or similar breakthroughs by rivals) to set off a recursive self-improvement loop that radically accelerates scientific and technological progress.

That might happen eventually, but I think it will take a while.

As human scientists perform experiments, their brains are hunting for patterns in the data that could give rise to new insights and new models of how the world works. But an AI scientist (at least one based on today’s LLMs and agent architectures) can’t learn from experiments in the same rich way. It has no reliable or scalable way to build implicit knowledge from data it sees at inference time.

Fixing that may require fundamentally rethinking the transformer architecture at the heart of today’s frontier models. At a minimum, it’s going to require overhauling today’s agentic frameworks.

How agents deal with limited LLM context

Many difficult intellectual tasks require “thinking” for a long time. Yet LLMs can only store a limited number of tokens in their working memory, known as the context window. For leading models, this limit has been stuck around 1 million tokens for the last couple of years. Moreover, due to economic constraints and the problem of context rot (which I wrote about in November), AI developers try to stay well below the maximum.

Managing this tension has been a major focus for the AI industry, which has developed a suite of “context engineering” techniques for using context efficiently. For example, many modern chatbots and agents rely on compaction, a process in which older information is periodically deleted or summarized.

This creates an illusion that the model has a much longer context than it actually does. But it can have big downsides if compaction goes awry. In one horrifying incident, a woman asked her AI agent to suggest emails for deletion, but not to actually delete them. Unfortunately, the latter request got lost during compaction, and so the agent started mass-deleting her emails.
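To make compaction concrete, here is a minimal Python sketch under loudly stated assumptions. It uses a crude word count in place of a real tokenizer and a hypothetical `summarize` helper that keeps only the first sentence of each older message; real systems typically ask the model itself to write the summary, and nothing here reflects any particular product’s implementation. The toy is rigged to show how a standing instruction like “do not delete anything” can vanish once older turns are folded into a summary.

```python
from dataclasses import dataclass


@dataclass
class Message:
    role: str
    text: str


def count_tokens(text: str) -> int:
    # Crude stand-in for a real tokenizer: one "token" per whitespace-separated word.
    return len(text.split())


def summarize(messages: list[Message]) -> Message:
    # Hypothetical, deliberately lossy summarizer: keep only the first sentence
    # of each older message. A real agent would ask the LLM for a summary,
    # which is where details can silently drop out.
    firsts = [m.text.split(". ")[0].rstrip(".") + "." for m in messages]
    return Message("system", "Summary of earlier conversation: " + " ".join(firsts))


def compact(history: list[Message], budget: int, keep_recent: int = 2) -> list[Message]:
    """If the history exceeds `budget` tokens, fold all but the last
    `keep_recent` messages into a single summary message."""
    total = sum(count_tokens(m.text) for m in history)
    if total <= budget or len(history) <= keep_recent:
        return history
    older, recent = history[:-keep_recent], history[-keep_recent:]
    return [summarize(older)] + recent


history = [
    Message("user", "Suggest emails I could delete. Do not delete anything until I confirm."),
    Message("assistant", "Understood. I will start with newsletters."),
    Message("user", "Also check the promotions folder."),
    Message("assistant", "Found 312 promotional emails."),
]
compacted = compact(history, budget=20)
for m in compacted:
    print(f"{m.role}: {m.text}")
```

Keeping the most recent turns verbatim and summarizing everything older is just one simple policy. The failure it exposes, a standing instruction that lives in an old turn, is a reason one might pin such instructions outside the region that gets summarized.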

Over the last year, AI companies have experimented with allowing models to store persistent information outside of the context window. Claude Code was a step in this direction. Claude Code runs on the user’s own computer and can read and modify files on the local hard drive. Once Claude Code has finished a particular coding task, it can write the results out to the affected file and no longer needs to keep the details in context.

OpenClaw, released in late 2025, goes a step further. It’s a general framework for running AI agents on a user’s local computer. OpenClaw agents — like Claude Code agents — can read and write files on the local filesystem, allowing them to store relevant documents and keep track of uncompleted tasks.

Enthusiasm for OpenClaw and other local agents has led to surging demand for Apple’s Mac mini computers. Installing OpenClaw on a Mac mini allows agents to connect to Apple services such as iMessage. At the same time, because macOS is based on Unix, agents have access to a powerful command-line interface called the Unix shell.

“At the end of the day, your agent is just its files”

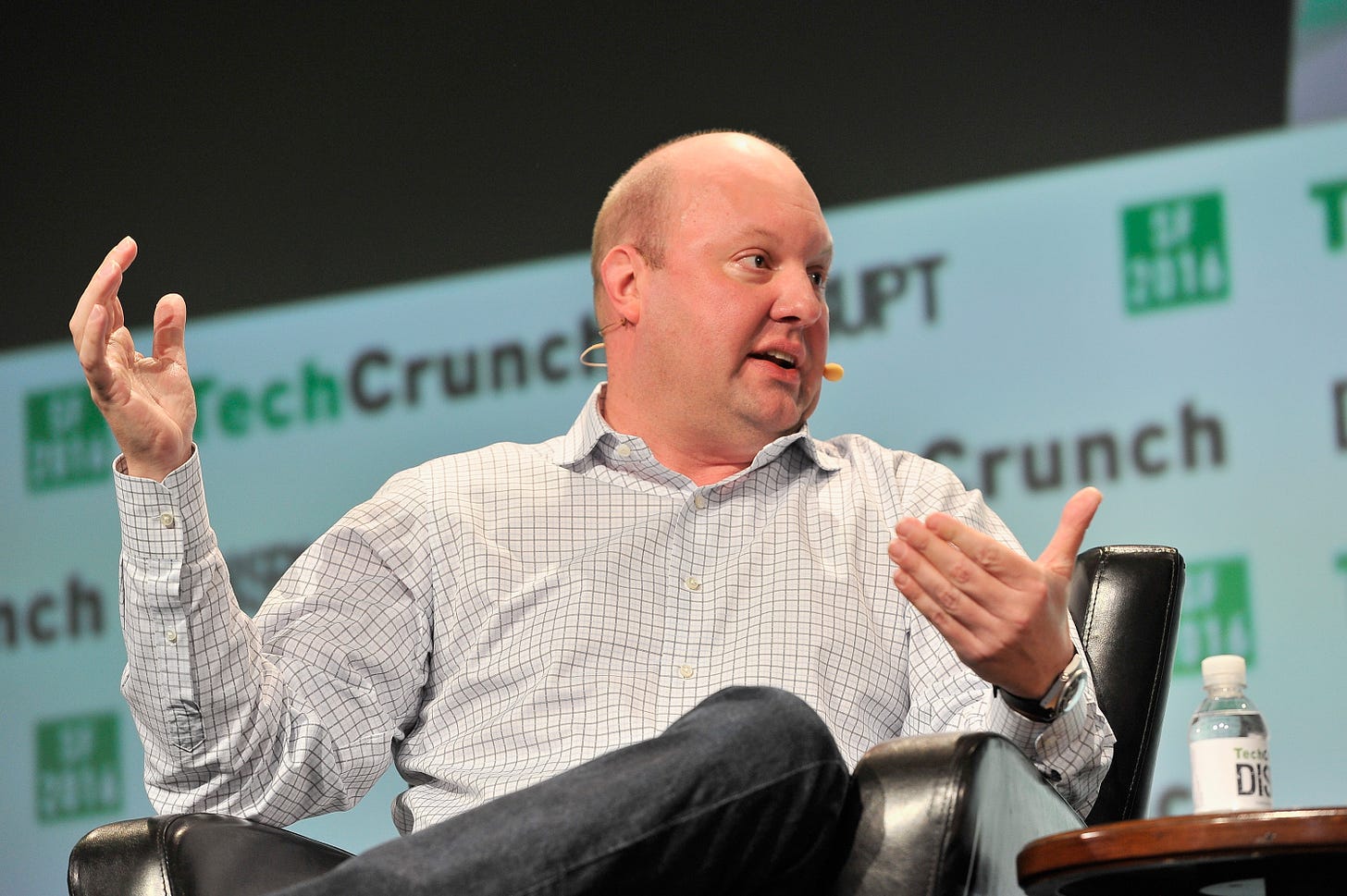

In a recent appearance on the Latent Space podcast, the venture capitalist Marc Andreessen argued that agents like OpenClaw represented an important new computing paradigm. Here’s a lightly edited excerpt:

We now know an agent is the following: It’s a language model. It’s a Unix shell. The agent has access to the shell. Then it’s a file system. The state is stored in files. There’s the Markdown format for the files. And then there’s basically what in Unix is called a cron job — a loop and a heartbeat — and the thing basically wakes up…

So that’s the architecture. And then it turns out, what is your agent? Your agent is a bunch of files stored in a file system.

This means your agent is independent of the model that it’s running on because you can swap out a different LLM underneath your agent. And your agent will change personality somewhat because the model is different, but all of the state stored in the files will be retained. It’s still your agent with all of its memories and with all of its capabilities.

You can also swap out the shell. So you can move it to a different execution environment. You can also switch out the file system. And you can swap out the heartbeat, the cron framework, the agent framework itself. At the end of the day, your agent is just its files.

As a consequence of that, the agent can migrate itself. You can instruct your agent, migrate yourself to a different runtime environment, migrate yourself to a different file system, swap out the language model. Your agent will do all that stuff for you.

The agent has full introspection. It knows about its own files and it can rewrite its own files. And that leads you to the capability that just completely blew my mind when I wrapped my head around it, which is you can tell the agent to add new functions and features to itself.

So you run into somebody at a party and they’re like, oh, I have my OpenClaw do whatever — connect to my Eight Sleep bed and it gives me better advice on sleep. So you go home at night — or there at the party — you tell your OpenClaw, “add this capability to yourself.”

And your claw will say, “okay, no problem.” It’ll go out on the internet and it’ll figure out whatever it needs and then it’ll write whatever it needs and then the next thing you know, it has this new capability. You can have it upgrade itself without even having to do anything other than tell it that you wanted to do that.
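The architecture Andreessen describes maps onto a very small loop. The Python sketch below is a toy under stated assumptions: the file names `MEMORY.md` and `TASKS.md`, the `heartbeat` function, and the two fake models are all hypothetical and are not OpenClaw’s actual layout or API. A real setup would trigger each wake-up from cron or a similar scheduler and call a real LLM.

```python
import tempfile
from pathlib import Path
from typing import Callable

# A "model" is anything that turns a prompt into text (a stand-in for an LLM call).
Model = Callable[[str], str]


def heartbeat(agent_dir: Path, model: Model) -> None:
    """One wake-up: load state from Markdown files, ask the model, persist the result."""
    memory = agent_dir / "MEMORY.md"
    tasks = agent_dir / "TASKS.md"
    prompt = "## Memory\n" + memory.read_text() + "\n## Open tasks\n" + tasks.read_text()
    reply = model(prompt)
    # Whatever isn't written back to disk is gone before the next wake-up.
    with memory.open("a") as f:
        f.write(f"- {reply}\n")


def model_a(prompt: str) -> str:
    # Fake model: counts unchecked Markdown task items in the prompt.
    open_tasks = prompt.split("## Open tasks\n", 1)[1].count("- [ ]")
    return f"model A saw {open_tasks} open task(s)"


def model_b(prompt: str) -> str:
    # A different fake model; it still sees everything model A wrote to memory.
    return f"model B found {prompt.count('model A')} note(s) from model A"


agent_dir = Path(tempfile.mkdtemp())
(agent_dir / "MEMORY.md").write_text("# Memory\n")
(agent_dir / "TASKS.md").write_text("- [ ] summarize inbox\n- [ ] book dentist\n")

# Two wake-ups on one model, then swap the model underneath the same files.
for model in (model_a, model_a, model_b):
    heartbeat(agent_dir, model)

print((agent_dir / "MEMORY.md").read_text())
```

Swapping `model_a` for `model_b` changes what gets written but not where the agent’s state lives: both read and extend the same two files, which is the sense in which the agent is “just its files.”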

This paradigm is only a few months old, so I expect it to evolve significantly over the next couple of years. For example, it’s not obvious whether most AI agents in the future will run on a user’s local computer or whether more people will use OpenClaw-like agents that operate on a virtual machine in the cloud.1 But I think Andreessen is right that this is an important new computing paradigm.

At the same time, Andreessen’s remarks highlight a big reason I remain skeptical that today’s AI models will get us to human-level intelligence. The sentence that jumped out at me was “your agent is just its files.” I think it’s worth unpacking what that implies for the future capabilities of agents like OpenClaw.

“Memento” at the office

The 2000 movie Memento features a protagonist who suffers from short-term memory loss. To cope with this, he regularly writes notes providing guidance and instructions to his future self. OpenClaw does something similar — the language model itself periodically resets its context window, but the agent maintains coherence by writing notes to itself.

Here’s an analogy. Suppose you need an employee, but rather than a permanent hire, you get a temp agency to send you a different person each week.

At the end of each week, the worker spends several hours meticulously documenting the week’s work.

Each temp worker comes into the office with general training for their industry and profession. So when they start reading on Monday morning, they only need to learn information specific to this particular job, not background information that would be widely known to others in the same field (LLMs, after all, start with general knowledge from a wide range of fields). They may not have time to read everything their predecessors have written, but the notes are well organized and they can use search tools to quickly find the most relevant documents.

How well would this arrangement work? It depends on the nature of the job. Some jobs — receptionists, pharmacists, plumbers — are fairly transactional. Workers are not expected to maintain much context between appointments, so it wouldn’t matter that a different person is providing the service each week.

But there are other jobs where context matters a lot. Some people work with the same clients over years, developing a deep understanding of their situations and goals in the process. Other jobs require workers to do in-depth research over the course of weeks or months in order to develop new insights.

In jobs like that, it could easily take more than a week’s worth of reading for a new worker to get “up to speed.”

I was an intern at Google in 2010. My first assignment was to add a column to an internal database. This only required a few lines of code. But it took me weeks of reading to learn enough about Google’s systems and development processes to write those lines.

This isn’t unique to programming. In many knowledge-intensive industries, it takes several months (at least) for a new employee to learn enough about a job to begin adding value. Prior to this point, the employee requires so much “hand-holding” that it would be faster for the manager to just do the job herself. In industries like this, it would be a non-starter for workers to cycle out after a week.

Implicit vs. explicit knowledge

I know what critics would say here: A human worker takes hours to read a 100,000-word document. An LLM can do it in seconds. If LLM-based coding agents had existed in 2010, they would not have taken weeks to make a minor change to a Google database.

The speed of LLMs means that one iteration of an OpenClaw-style agent can leave very detailed notes for its successors. It also means that OpenClaw can go through hundreds of iterations of the read-act-write loop in the time it takes a human worker to do it once.

This probably means that OpenClaw agents can accomplish more than my human analogy suggests. Over thousands of iterations they might be able to make progress even on fairly challenging problems.

That’s a fair point as far as it goes, but I think a lot of human jobs will remain out of reach.

Four years ago, I wrote an article about the concept of “greedy jobs” — jobs where workers who put in longer hours tend to make more per hour. There are a number of reasons jobs can be greedy, but a big factor is that knowledge workers often do better work with more experience. The advantages of more experience — greater context — can continue compounding across a multi-decade career.

For example, I’ve been writing about technology and economics for more than 20 years. I’ve written about Brexit, patent trolls, lidar sensors, and many other topics. At any given point in time, most of this knowledge isn’t relevant to whatever I’m writing about. But in the aggregate, it increases the odds I’ll have something interesting to say on any given topic.

It would be completely impractical for me to write down everything I know, hand off my notes to another journalist, and expect her to do my job as well as I do. It’s not just that it would take me months to summarize everything I’ve learned over a 20-year career. It’s that I have a lot of implicit knowledge I don’t know how to put into words.

My explicit beliefs — things I’m able to articulate in conversation or write down in an email — are the tip of an iceberg. Below the water line is a much larger set of hunches, vague associations, and half-formed theories. Because this stuff is implicit, it can’t easily be transferred to another person. But it’s essential for me to do my job well.

My publishable epiphanies often start out as hunches. I become convinced that something is true well before I figure out how to prove it. Often I need to “turn an idea over” in my mind for hours or days before I can explain it clearly.

And I don’t think I’m unique. The same seems to be true for scientists, engineers, business leaders, and many other knowledge-based professions. Many insights start out as implicit ideas in people’s heads — or “on the tips of their tongues” — before anyone figures out how to translate them to English, Python, or any other explicit form.

As I discussed earlier, LLMs do have implicit knowledge like this. But most, if not all, of it was learned during their initial training process. LLMs seem to lack a capacity for continual learning: the ability to recognize new patterns in — and form new hunches about — information they encounter at inference time.

Moreover, whatever implicit knowledge an LLM does develop during a particular session is lost when an agent framework hands off control from one LLM instance to the next. During this transition, everything the agent knows gets stored in a set of external files — as Andreessen put it, “your agent is just its files.” By definition, implicit knowledge — knowledge that an agent can’t explain in natural language, code, or other explicit form — won’t survive these handoffs.
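The handoff problem can be illustrated with a deliberately simplified Python toy. Everything in it is hypothetical: `AgentInstance` is not any real framework’s class, and a `Counter` of observations stands in for the patterns an LLM picks up during a session, which in a real model are not stored in any neat, inspectable variable. The point is only structural: the handoff serializes the explicit notes, so anything the instance knew but never wrote down does not reach its successor.

```python
import json
from collections import Counter
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class AgentInstance:
    """Toy model of one LLM session inside an agent framework (hypothetical)."""

    notes: List[str] = field(default_factory=list)  # explicit knowledge: gets saved
    # Stand-in for implicit knowledge: patterns the instance picks up and acts on
    # but never articulates in its notes.
    seen: Counter = field(default_factory=Counter)

    def observe(self, result: str) -> None:
        self.seen[result] += 1

    def hunch(self) -> Optional[str]:
        # Guess the most common outcome so far, or None with no experience.
        return self.seen.most_common(1)[0][0] if self.seen else None

    def handoff(self) -> str:
        # Only the notes are serialized; `seen` is deliberately left out.
        return json.dumps({"notes": self.notes})

    @classmethod
    def resume(cls, saved: str) -> "AgentInstance":
        return cls(notes=json.loads(saved)["notes"])


first = AgentInstance()
for result in ["assay failed", "assay failed", "assay passed", "assay failed"]:
    first.observe(result)
first.notes.append("Ran four assays.")

second = AgentInstance.resume(first.handoff())
print(first.hunch())   # assay failed
print(second.hunch())  # None
print(second.notes)    # ['Ran four assays.']
```

In this toy, of course, the counts could simply be written down. The argument above is that for an LLM, much of what it picks up mid-session has no such explicit form, so there is nothing to put in the notes.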

And I have a strong hunch that these underbaked thoughts are the raw material people use to fashion original insights about the world. And so I suspect that for at least the next few years, we’re going to need human workers to do our deep thinking for us.

Thanks to Daniel Kagan-Kans, Andrew Lee, Steve Newman, and Nat Purser for giving me feedback on a previous draft of this article.

This is the point Dwarkesh Patel made last year in talking about “continual learning”. And I’m arguing in a talk I’m giving these days that it’s actually very close to the point that Hubert Dreyfus was making about expert systems back in the 1980s (and about a lot of analytic philosophy). It’s true that a lot of intelligence can be reduced to knowledge that can be expressed as sentences in a language. But there are things you need to practice and optimize on and can’t express in words.

Modern LLMs do a great job of improving on expert systems by having a bottom layer that has trained and practiced. All the types of reinforcement learning they’re adding are doing more. But they don’t do any better on your own task than the instructions you can write down unless that task makes it into the reinforcement learning loop for the next model.

Tim, this is one of the cleanest popular-press articulations of the implicit-knowledge problem I've read, and the temp-worker analogy in particular does real work.

One push: the reason a seasoned hunch is trustworthy isn't just that it's pattern-rich. It's that it has carried consequences. The practitioner has made calls, paid for the wrong ones, and adjusted. That loop gives the hunch its weight. An LLM mid-session has nothing in the loop that bears a cost, which means this isn't a context-window problem; it's a stake problem.

Extended the thought (and where it goes for governance) here: https://substack.com/@jammit1994/p-196711207